There's a thread on r/ClaudeCode with the best title: "Convince me that agent teams are not pointless." There's plenty of chatter there, because it's a very valid question.

Honestly? Claude agent teams are pointless for most things. Agent teams cost 3–4x the tokens of a single session. They add real coordination overhead. For a bug fix, a refactor, a new component — they're the wrong tool and you'll come out slower and poorer.

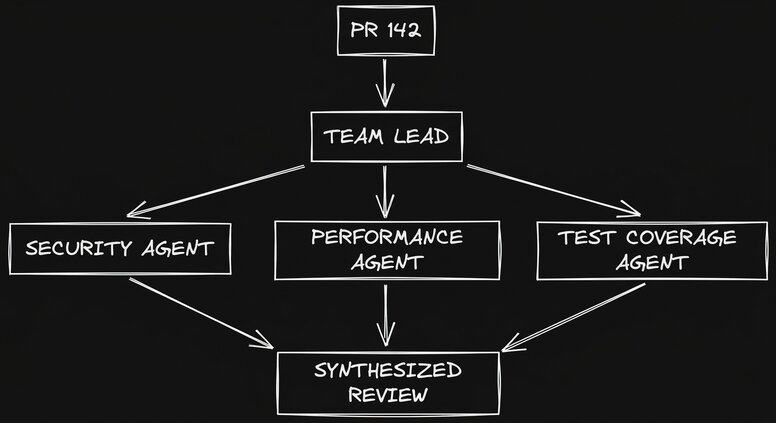

But for the specific cases where they shine, nothing else gets close. A parallel code review where three agents investigate security, performance, and test coverage simultaneously, each staying uncontaminated by the others' findings. Five agents pursuing competing theories on a gnarly bug, each pushing back on the others. Frontend, backend, and tests all moving at once without waiting on each other. These are genuinely different from what you can do in a single session.

If you just want to see what multi-agent development looks like at full scale — 20+ agents running in parallel cloud containers, each with its own dev environment and browser preview, your PM verifying UI changes directly in the live branch — that's Builder.io. Try it free. This article is about the local version of that idea, and exactly when it's worth the overhead.

What agent teams actually are

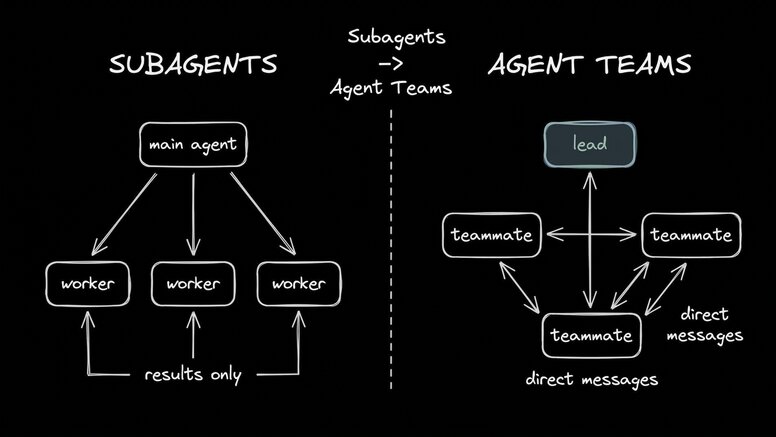

The short version: one lead, multiple teammates, all coordinating on shared work.

The lead is your main Claude Code session. It creates a task list, spawns teammates, assigns work, and synthesizes results. Each teammate is a fully independent Claude Code instance — its own context window, its own instructions, its own terminal pane if you're using tmux. They communicate by messaging each other directly through a shared mailbox system: sending a message appends JSON to an inbox file, claiming a task updates a task file. It's AI collaboration designed like software, not chat.
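To make the mailbox idea concrete, here is a minimal sketch of a file-based inbox and task claim. The file layout, field names (`from`, `body`, `owner`, `status`), and function names are all assumptions for illustration, not Claude Code's actual schema, and a real implementation would need file locking to make claims safe under concurrency.

```python
import json
import time
from pathlib import Path


def send_message(inbox: Path, sender: str, body: str) -> None:
    """Append one JSON message to a teammate's inbox file (one object per line)."""
    inbox.parent.mkdir(parents=True, exist_ok=True)
    record = {"from": sender, "body": body, "sent_at": time.time()}
    with inbox.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def read_inbox(inbox: Path) -> list[dict]:
    """Return every message currently in the inbox, oldest first."""
    if not inbox.exists():
        return []
    with inbox.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def claim_task(task_file: Path, owner: str) -> bool:
    """Mark a task as owned by `owner`; return False if someone else holds it.

    Hypothetical task format: a JSON object with an optional "owner" key.
    This read-modify-write is NOT atomic; it only illustrates the idea.
    """
    task = json.loads(task_file.read_text(encoding="utf-8"))
    if task.get("owner") not in (None, owner):
        return False
    task["owner"] = owner
    task["status"] = "in_progress"
    task_file.write_text(json.dumps(task, indent=2), encoding="utf-8")
    return True
```

The point of the sketch is that coordination state lives in plain files any teammate can read, which is why it behaves more like software than like a chat thread.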

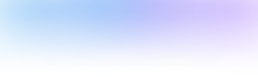

The thing that separates agent teams from subagents is that word: communication. Subagents do work and report results back to the main agent. That's it. Teammates message each other, challenge each other's findings, share what they've learned, and coordinate without you managing every handoff. As Dára Sobaloju put it: "Subagents are function calls. Agent teams are organizations."

That distinction is what makes them powerful — and also what makes them overkill for most tasks.

One important thing to know: there's no /resume for agent teams. Session dies, team is gone. Plan accordingly.

The honest signal/noise filter

No article clearly answers when agent teams are worth it and when they aren't, so here it is.

Green — this is where agent teams earn their cost:

- Parallel code review. Security, performance, and test coverage reviewed simultaneously by separate agents. The reason this works better than one agent doing all three: bias isolation. The first thing a single agent finds anchors its whole review. Separate agents each start clean. The lead synthesizes after.

- Competing hypothesis debugging. You have five theories about why something is broken. You spawn five agents, each pursuing one, each trying to disprove the others. Context bias is the real enemy in debugging — one agent finds evidence for Theory A and stops looking hard at B through E. Separate agents protect each theory from that contamination.

- Cross-layer features. Frontend, backend, and tests each owned by a different teammate, moving in parallel. No waiting. Each agent stays in its domain.

- Large read-only exploration. Investigation, research, context gathering — tasks where agents only read never create file conflicts. Great starting point if you're new to this.

Yellow — might work, depends on how you structure it:

- Multi-file refactors with clean domain separation (works well if you can guarantee no file overlap)

- Writing pipelines: research, draft, and edit running concurrently (the coordination overhead is worth it at scale, not so much for a single article)

Red — don't use agent teams:

- Sequential tasks with heavy dependencies (one thing has to happen before the next — just use subagents or a single session)

- Same-file edits (merge conflicts guaranteed, no exceptions)

- Anything small enough to finish in one session (the overhead doesn't pay off)

- Cost-sensitive workflows (each teammate is a full context window; a 4-teammate team can run through tokens fast)

The official docs say it plainly: "Agent teams work best when teammates can operate independently." If your tasks are tightly coupled, don't pay for a team.
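If it helps to see the filter as a decision rule, here is one rough reading of it in code. The `Task` fields and the `rate` function are hypothetical names, and the green/yellow boundary in particular is a simplification of the list above, not an official rubric.

```python
from dataclasses import dataclass


@dataclass
class Task:
    independent: bool                  # can teammates work without waiting on each other?
    same_file_edits: bool              # would two teammates edit the same file?
    fits_one_session: bool             # small enough to finish in a single session?
    cost_sensitive: bool               # is token spend a hard constraint?
    read_only: bool = False            # review, research, investigation
    disjoint_ownership: bool = False   # file ownership is guaranteed not to overlap


def rate(task: Task) -> str:
    """Return 'red', 'yellow', or 'green' for a candidate agent-team task."""
    # Any red condition from the list above rules a team out.
    if (not task.independent or task.same_file_edits
            or task.fits_one_session or task.cost_sensitive):
        return "red"
    # Read-only work, or write work with guaranteed separation, is the sweet spot.
    if task.read_only or task.disjoint_ownership:
        return "green"
    # Parallel write work without a separation guarantee depends on structure.
    return "yellow"
```

For example, `rate(Task(independent=True, same_file_edits=False, fits_one_session=False, cost_sensitive=False, read_only=True))` lands in green, while flipping `fits_one_session` to `True` sends the same task to red.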

The management mindset (what nobody actually teaches you)

Everyone says "you're a tech lead now." Here's what that actually means.

Enable delegate mode. Hit Shift+Tab to toggle it. The most common rookie mistake is leaving the lead in its default mode, where it writes code instead of coordinating. With delegate mode on, the lead plans and delegates. Without it, you've paid for a team and your most expensive agent is doing junior engineer work.

Brief teammates like it's their first day. Teammates don't inherit your conversation history. They load your CLAUDE.md, your MCP servers, and the spawn prompt you give them. That's it. If you spent an hour building up context about the codebase in your main session, none of it carries over. Be explicit: project structure, relevant files, coding conventions, the specific goal. Skimping on the spawn prompt is the most common reason results are mediocre.
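One way to avoid skimping is to build every spawn prompt from the same checklist. The sketch below is only an illustration: `build_spawn_prompt` and its parameters are made-up names, and the resulting text is something you would paste into your instructions to the lead, not an API that Claude Code exposes.

```python
def build_spawn_prompt(
    role: str,
    goal: str,
    project_structure: str,
    relevant_files: list[str],
    conventions: list[str],
) -> str:
    """Assemble a first-day briefing covering the four items a teammate needs."""
    lines = [
        f"You are the {role} teammate.",
        f"Goal: {goal}",
        "",
        "Project structure:",
        project_structure.strip(),
        "",
        "Relevant files:",
        *[f"- {path}" for path in relevant_files],
        "",
        "Coding conventions:",
        *[f"- {rule}" for rule in conventions],
    ]
    return "\n".join(lines)
```

Because every argument is required, forgetting the project structure or the conventions fails loudly instead of producing a thin brief.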

Enforce domain separation. Frontend agent works in /frontend. Backend agent works in /backend. Two agents touching the same file is a merge conflict waiting to happen. This is non-negotiable. If you can't cleanly separate the domains, don't use a team.
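A quick pre-flight check can catch overlapping ownership before you spawn anyone. This is a minimal sketch assuming you describe each teammate's territory as a list of repo-relative paths; the function names and the ownership mapping are illustrative, not part of Claude Code.

```python
from itertools import combinations
from pathlib import PurePosixPath


def _overlaps(a: str, b: str) -> bool:
    """True if the two paths are equal or one contains the other."""
    pa, pb = PurePosixPath(a), PurePosixPath(b)
    return pa == pb or pa in pb.parents or pb in pa.parents


def find_ownership_conflicts(
    ownership: dict[str, list[str]],
) -> list[tuple[str, str, str, str]]:
    """Return (teammate_a, path_a, teammate_b, path_b) for every overlap.

    Paths are assumed to be relative to the repo root, e.g. "frontend".
    """
    conflicts = []
    for (mate_a, paths_a), (mate_b, paths_b) in combinations(ownership.items(), 2):
        for path_a in paths_a:
            for path_b in paths_b:
                if _overlaps(path_a, path_b):
                    conflicts.append((mate_a, path_a, mate_b, path_b))
    return conflicts
```

An empty result means the split is clean. Anything else, such as one teammate owning `shared/types.ts` while another owns `shared`, is a conflict to resolve before the team starts.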

5–6 tasks per teammate max. Don't dump 20 things on one agent. Break work into self-contained units with clear deliverables, kick them off one at a time, bring the teammate back. A lot of the best practices for managing real engineers apply directly here.

Monitor actively, don't set and forget. If you let a team run unguided for too long, agents go off the rails and you waste tokens. The split-pane tmux view makes this much easier — you can see all your agents working simultaneously. Watch for the lead starting to implement things itself instead of delegating. When that happens, tell it: "Wait for your teammates to complete their tasks before proceeding."

Start with read-only tasks. If you're new to this, lean toward investigation and research first. No file writes means no conflicts. Once you're comfortable with the coordination mechanics, move to implementation.

You're not prompting an AI anymore. You're running sprint planning.

Setup in 90 seconds

1. Enable the flag

Add this to your ~/.claude/settings.json:

    {
      "env": {
        "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
      }
    }

Restart Claude Code.
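If your settings file already has content, pasting the snippet over it would wipe your other settings. Here is a small sketch that merges the flag in instead. The helper name `enable_agent_teams` is an assumption for illustration, and it presumes the file holds a plain JSON object.

```python
import json
from pathlib import Path


def enable_agent_teams(settings_path: Path) -> dict:
    """Set the experimental flag while preserving any existing settings."""
    settings = {}
    if settings_path.exists():
        text = settings_path.read_text(encoding="utf-8").strip()
        if text:
            settings = json.loads(text)
    # Keep other env entries; only add or overwrite the agent-teams flag.
    settings.setdefault("env", {})["CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"] = "1"
    settings_path.parent.mkdir(parents=True, exist_ok=True)
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings
```

You would call it as `enable_agent_teams(Path.home() / ".claude" / "settings.json")`. Back the file up first if you have customized it heavily.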

2. Install tmux (strongly recommended)

    brew install tmux # macOS

That's it. Claude creates the team, spawns the teammates, and starts coordinating. You guide from there.

The real use cases (with actual prompts)

Parallel code review

This is the best entry point into agent teams. The prompt is simple, the value is immediate, and the risk of file conflicts is zero (everyone's reading, nobody's writing).

    Create an agent team to review PR #142. Spawn three reviewers:
    - One focused on security implications and vulnerability patterns
    - One checking performance impact and potential bottlenecks
    - One validating test coverage and edge cases
    Have each reviewer go deep in their lane. Then combine into one report
    with severity ratings.

Why this works better than asking one agent to do all three: in a single-agent review, the first finding anchors everything after it. If the agent turns up a bad token-handling issue early, the rest of the review gravitates toward security concerns and loses objectivity on performance. Separate agents each start fresh. The lead gets three clean perspectives and synthesizes.

Competing hypothesis debugging

This one's underused and genuinely novel.

    Users report the dashboard crashes after the second data refresh. I have
    five theories:
    1. Race condition in the state update
    2. Memory leak from uncleaned event listeners
    3. WebSocket reconnect flooding the connection pool
    4. Stale closure capturing an outdated reference
    5. Response parsing failing on an edge case in the data shape
    Spawn 5 agent teammates to investigate these simultaneously. Have them
    actively try to disprove each other's theories, like a scientific debate.
    Compile the surviving evidence into a findings doc.

The "actively disprove each other" instruction matters. Without it, agents will confirm their theory and stop. With it, you get actual falsification. The theory that survives is much more likely to be the real root cause.

For context on what's possible at scale: Anthropic built a C compiler using 16 Claude agents across roughly 2,000 sessions, consuming about 2 billion input tokens, at a cost under $20K. That's the ceiling of the technique — not a practical day-to-day workflow, but a useful reminder of what this architecture can do when the problem genuinely warrants it.

The ceiling — and what's beyond it

Here's what you run into as you push agent teams further:

3–5 teammates is the practical limit. Beyond that, coordination overhead starts eating the gains. The communication, the context loading, the lead trying to track everyone — it gets messy fast.

Sessions are ephemeral. Team dies with the session. No resume, no rewind. If you're mid-task and Claude crashes, the team is gone. Plan for this.

Your machine pays the price. Each agent is a full Claude Code instance. Five teammates means five context windows running simultaneously. On a laptop, you'll feel it — RAM, CPU, fans running hard.

No browser preview. You can monitor agents in terminal panes, but there's no way to verify UI changes in a live browser without switching out of the workflow. Iteration on visual changes is awkward.

No team collaboration. It's just you and the terminal. Your PM can't pop into a branch and verify the output. Your designer can't adjust the responsive behavior. You're still the only human in the loop.

Builder.io is what agent teams look like when you move them to the cloud. Instead of 3–5 teammates on your laptop, you get 20+ agents in parallel containers — each with its own full dev environment and browser preview. Your PM can verify UI changes directly in the live branch. Your designer can tweak the responsive behavior without a Figma handoff. You review a fully-validated PR, not raw code output that needs manual QA. The concept is the same. The ceiling is much higher.

If agent teams gave you a taste of what multi-agent development feels like, Builder.io is where that idea scales. Try it free.

FAQ

Are agent teams worth it?

For the right tasks, yes — parallel code review and competing hypothesis debugging in particular. For everything else, probably not. The token cost and coordination overhead are real. Use the Green/Yellow/Red filter above.

When should I use agent teams vs. subagents?

Use subagents when workers just need to do isolated tasks and report back. Use agent teams when workers need to share findings, challenge each other, and coordinate without you managing every message. If they don't need to talk to each other, subagents are cheaper and simpler.

How many teammates should I use?

Start with 3; most workflows don't need more than 4–5. Add teammates only when you can cleanly separate the domains and you're confident the parallel work pays off.

How much do agent teams cost?

Plan on 3–4x the tokens of a single session for a typical team. Each teammate has its own full context window. A 4-teammate team loading the same project context is 4x the initialization cost before any actual work happens. For research, review, and complex cross-layer features, the extra tokens are usually worth it. For anything that a single session can handle in one pass, stick with a single session.
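The arithmetic is simple enough to sanity-check before you spawn anything. This sketch uses the 3–4x rule of thumb from above. The function name and the token figures in the usage note are made-up illustrations, not measurements.

```python
def estimate_team_cost(
    single_session_tokens: int,
    project_context_tokens: int,
    teammates: int,
    multiplier_range: tuple[float, float] = (3.0, 4.0),
) -> dict[str, int]:
    """Rough token budget for a team, using the 3-4x rule of thumb."""
    low, high = multiplier_range
    return {
        # Every teammate loads the project context into its own window.
        "init_tokens": project_context_tokens * teammates,
        # Overall spend, scaled from what one session would have used.
        "total_low": int(single_session_tokens * low),
        "total_high": int(single_session_tokens * high),
    }
```

With illustrative numbers, a task that would take 200,000 tokens in one session, on a project whose context costs 30,000 tokens to load, gives a 4-teammate team 120,000 tokens of initialization and a total somewhere between 600,000 and 800,000 tokens.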